When it really comes down to it, the wild success of mathematics in describing physical concepts is what makes it matter so much. The mythologies and folk legends that have sprung up around the history of science tell us that it was mathematics that led us out of our ignorant state, that it was quantitative measurement that allowed us to understand our Universe and wrench control out of the hands of those who would keep us from that understanding, that if mathematics is liberating it must be a tool we can use to liberate all knowledge.

This is perhaps more than a little unfair on all but the most devoted fans of scientism, but there is a nugget of truth in there. Because mathematics has been so successful in (some of) the physical sciences (some of the time), people argue that if we use more quantitative methods in research, that research will become better – whatever “better” really means.

The idea that more maths makes for better science is a seductive one. It’s also one that becomes less and less convincing the more you think about it.

Brief sketch of a killjoy

You might be wondering what qualifies me to write this essay. Telling a maths-loving audience that maybe not all knowledge is improved by maths is provocative. If I’m doing this, I’d better have some very good experience.

And I do have good experience: specifically, I’ve got an uncommon breadth of experience. I started my academic life as a physicist, working on optics and having to learn a lot of maths along the way. (I worked on building an easy-to-use Raman spectroscopy experiment for our undergraduate teaching labs. Put very briefly, Raman spectroscopy involves shining laser light on a material and detecting how that light changes, in order to work out information about the material. The project was aimed much more towards education than research, but it was still physics.) It was my physics training that convinced me of how important mathematics is to the physical sciences, how deeply it underpins a physical understanding of the world. I am not here to argue that mathematics is bad, only that it can be misused.

I switched disciplines and studied science communication, which as an academic field is far closer to the social sciences and humanities than it is to the physical sciences. I did qualitative research, less because I was intent on betraying my quantitative roots as a physicist and more because I believed that trying to capture what I wanted to capture quantitatively would have made my research worse. (More on this, and why quantitative research could ever be worse than qualitative research, later on.)

Now I study the history of space science, using a mixture of oral history and material culture approaches; put slightly more simply, I talk to people and study bits of spacecraft. That’s heavily qualitative, to the point where approaches based on interpreting bits of text probably won’t work (at least not on the material culture part, anyway, because they’re bits of spacecraft and not text).

You could argue that since I was an experimental physicist and left the field before doing a PhD, I’m not a “real” physicist and am more closely affiliated with social sciences and humanities fields – not “real” sciences and certainly not ones with the authority to say “maths isn’t always right”. Equally, stepping outside my original field and working in more qualitative disciplines has shown me cases where it would be flat-out wrong to use a quantitative approach, or even where quantitative approaches would harm my research.

(Of course, you could argue that non-quantitative research isn’t worth doing. That’s certainly a position you can take. Since some human and animal behaviour isn’t necessarily best captured by quantitative research, it’s up to you whether you want to write off understanding how and why people and animals do what we do.)

Enough about me, though: let’s talk about maths.

The reasonable ineffectiveness of mathematics

The first case I’ve been angling at making is that sometimes, mathematical tools are the wrong ones to be using.

Sometimes, mathematical tools go right – very right. Physics is a great example of this: by quantifying our observations and manipulating the quantities, we’ve learned incredible amounts about our Universe.

On the other hand, let’s say you want to find out what people enjoy about playing video games. For a higher-stakes example, let’s say you want to find out some of the reasons why parents don’t vaccinate their children. People can’t easily quantify what they like or dislike; sometimes they can’t even tell you plainly in a conversation!

If you genuinely, truly have no idea why someone is acting the way they are, and you have no theoretical model for why they act the way they do, sometimes sitting down and asking them is the best way to find out. Even if that means collecting qualitative data. If you go straight into collecting quantitative data, without knowing why people say they do what they do, you’re not going to get good data, even if that data nominally looks better because it’s quantitative.

You have to pick the right methods to do your research. The right methods won’t always be the most quantitative ones (or the most qualitative ones). They will be specific to your research questions.

Hail the scale

But let’s say you have enough information about what people find enjoyable about video games, or enough information about some of the major themes that crop up when parents aren’t sure about vaccinating their children. Surely then you could attempt to quantify some of that information?

Well, people do. You can ask people to quantify how much they agree or disagree with a given statement, how much pain they’re in, how funny they think something is…

…Mostly the people who do this are psychologists. I’m not a psychologist, so I’ll try not to overstep my disciplinary boundaries here. That said, there are problems with asking people to put numbers on things. One of the more pressing ones is that people don’t always answer accurately when you ask them questions (though of course, this is also a problem with trying to get qualitative data out of people). Broadly speaking, this is known as response bias. Fortunately, there are various methods you can use to reduce or account for different types of response bias.

When all you’ve got is a hammer…

…not everything is a nail.

Sometimes you have great maths, powerful maths, proven maths. If you take that great, powerful, proven maths and apply it anywhere, it’ll unlock the secrets of the universe.

Except for when it doesn’t and it gives you nonsensical results instead, because you can’t just apply any given equation to any research question and assume it holds.

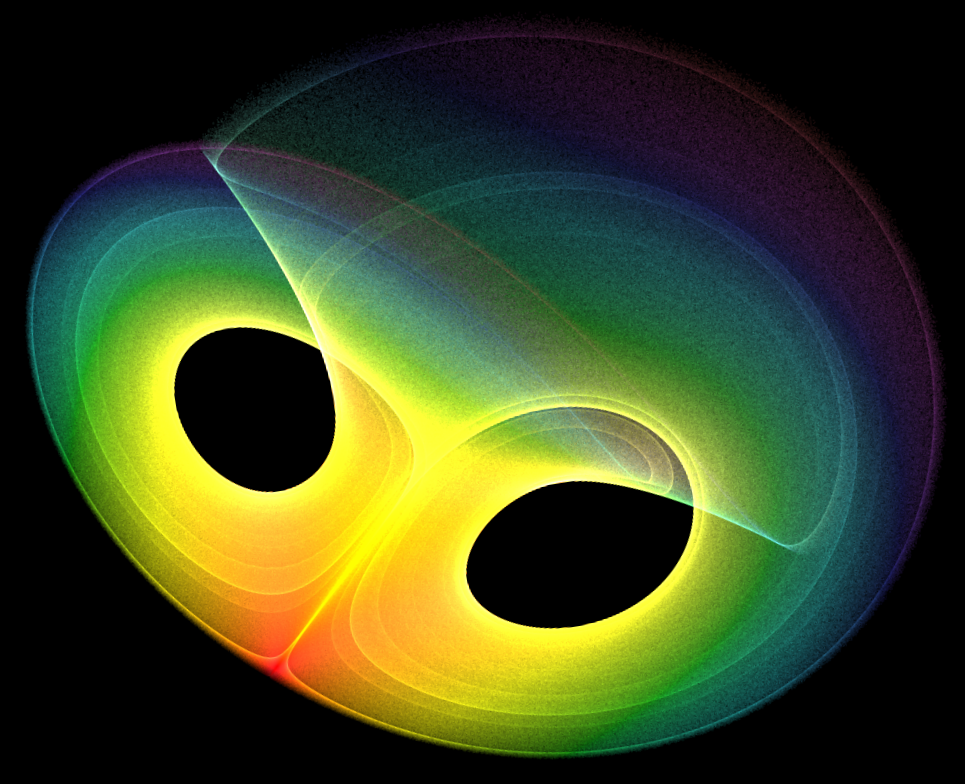

It might seem ridiculous, but people have done this. An influential psychology paper, which claimed a ratio of 3 positive interactions to 1 negative interaction, was based on the Lorenz equations from fluid dynamics. They look like this:
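In their standard textbook form, the three Lorenz equations are written as follows. The symbols here follow the usual convention and are an assumption about notation, not necessarily the one used in the psychology paper:

$$
\begin{aligned}
\frac{dx}{dt} &= \sigma (y - x) \\
\frac{dy}{dt} &= x (\rho - z) - y \\
\frac{dz}{dt} &= x y - \beta z
\end{aligned}
$$

Here $x$ is proportional to the rate of convection, $y$ and $z$ describe how the temperature varies horizontally and vertically, and $\sigma$, $\rho$ and $\beta$ are constants set by the fluid and the experimental setup.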

Very broadly speaking, these equations describe how the rate of convection and the temperature of a fluid can change over time. They do this very well. This article from HowStuffWorks goes into more detail; if you want to dive into the technicalities of nonlinear dynamics and don’t mind hearing about Hopf bifurcations or Lyapunov exponents, this lecture explains the Lorenz equations beautifully.

The Lorenz equations are an example of a set of differential equations. Just as the Lorenz equations describe how fluids change over time, differential equations tell you how a given quantity (temperature, mass, length, force…) changes over time, or space, or whatever you can think of. In other words: differential equations are powerful, provided you can sensibly apply them to the scenario in front of you.
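To make “how a quantity changes over time” a bit more concrete, here is a minimal sketch that steps the Lorenz equations forward numerically. It is only an illustration: the function names are hypothetical, the parameter values (σ = 10, ρ = 28, β = 8/3) are the classic textbook choice rather than anything taken from the psychology paper, and the fourth-order Runge-Kutta stepping scheme is my assumption about a reasonable way to do it.

```python
def lorenz_rates(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Return (dx/dt, dy/dt, dz/dt) for the Lorenz system at `state`.

    The default parameters are the classic textbook values (an assumption).
    """
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance `state` by one time step `dt` using classical Runge-Kutta."""

    def shifted(base, rates, scale):
        # Move each coordinate along its rate of change by `scale`.
        return tuple(b + scale * r for b, r in zip(base, rates))

    k1 = lorenz_rates(state)
    k2 = lorenz_rates(shifted(state, k1, dt / 2))
    k3 = lorenz_rates(shifted(state, k2, dt / 2))
    k4 = lorenz_rates(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def simulate(initial_state, dt=0.01, steps=2000):
    """Return the list of states visited, starting from `initial_state`."""
    trajectory = [initial_state]
    for _ in range(steps):
        trajectory.append(rk4_step(trajectory[-1], dt))
    return trajectory


if __name__ == "__main__":
    path = simulate((1.0, 1.0, 1.0))
    # Print a few snapshots of the three quantities as time passes.
    for step in range(0, len(path), 500):
        x, y, z = path[step]
        print(f"t={step * 0.01:5.2f}  x={x:8.3f}  y={y:8.3f}  z={z:8.3f}")
```

Notice how little the code asks of you: it will happily produce numbers for any starting values, whether or not `x`, `y` and `z` correspond to anything real. Running the equations is the easy part; justifying them is where the work is.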

The catch here is applying them sensibly. Explaining what “sensibly” means in a bit more detail, this works out as:

- Identifying and precisely defining the given quantities you want to study

- Justifying why those quantities don’t change on their own

- Justifying why those quantities should change based on an equation at all

- Justifying why that equation should be a differential equation, anyway

- Working out the precise form of the differential equations

The researchers who wrote that paper didn’t apply the differential equations sensibly. They took a model and used it completely inappropriately. So, even though the maths they used is well established, their results are absolutely meaningless, because they weren’t using it in the right context.

This is what happens when you use maths without knowing how to apply it sensibly: you get bad science and bad data. And maybe it seems laughable, maybe it seems like it’s a bit of harmless fun, but that bad science is still out there. It’s made its way into avenues from popular self-help books to academic articles and is affecting millions of people around the world.

It matters that quantitative methods aren’t always better, because good research matters and impacts the world. Getting things wrong hurts people, badly.

So having more maths and more quantitative methods doesn’t make things better – but knowing what questions to ask and how best to answer them does. The best research methods aren’t better because they have more maths; they’re better because they allow people to answer their research questions better.

That might sound confusing, even tautological. That’s fine! Research is tough. There are no simple answers, no simple ways to make things make more sense. That’s integral to answering questions about our Universe. Just as mathematics still helps us to understand more about the world around us, research in general will help us to understand more about what works and what doesn’t.